Need to pitch this at the lowest possible cost

I can't stress it enough: except for a few borderline cases, Adaptive Bitrate (ABR) is simply a must-have. Your viewers need playback to start fast, and they need to be able to watch the game on poor or fluctuating networks.

I'm revisiting the topic because there are so many angles to approach it from. This time, (unsurprisingly) cost was the primary factor, and I had to pull every trick in the book to get it this cheap.

In no particular order, and not necessarily meant to be used together (some are actually incompatible), here are some tactics to reduce the cost of an ABR setup:

Reduce the number of ABR renditions

The main point of ABR is to allow bandwidth-challenged viewers to play your content smoothly, even if at a lower quality. The more renditions you offer, the more closely you can match each viewer's capabilities (sometimes it's not just bandwidth, but also decoding horsepower and video canvas resolution) and offer them the best possible viewing experience.

On the other hand, having fewer renditions may lock some users into a less-than-ideal quality setting, though one that is still fluid, of reasonable visual quality, and with good audio. Especially if your content does not demand top quality (e.g. news), this can save a lot on transcoding in the long run.
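To make the trade-off concrete, here is a minimal sketch of a throughput-based rendition picker run against two hypothetical ladders, a five-rung one and a three-rung one. The ladder bitrates, the 0.8 safety margin and the `pick_rendition` helper are all assumptions for illustration; real players use more elaborate heuristics (buffer levels, dropped frames and so on).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    name: str
    height: int       # vertical resolution in pixels
    video_kbps: int   # target video bitrate


# Hypothetical ladders; the bitrates are illustrative only.
FULL_LADDER = [
    Rendition("1080p", 1080, 5000),
    Rendition("720p", 720, 3000),
    Rendition("540p", 540, 2000),
    Rendition("360p", 360, 1000),
    Rendition("240p", 240, 500),
]
LEAN_LADDER = [
    Rendition("1080p", 1080, 5000),
    Rendition("540p", 540, 2000),
    Rendition("240p", 240, 500),
]


def pick_rendition(ladder, measured_kbps, max_height, safety=0.8):
    """Return the highest-bitrate rendition that fits within a safety margin
    of the measured bandwidth and does not exceed the player's height.
    Falls back to the lowest rung when nothing fits."""
    budget = measured_kbps * safety
    candidates = [r for r in ladder
                  if r.video_kbps <= budget and r.height <= max_height]
    if not candidates:
        return min(ladder, key=lambda r: r.video_kbps)
    return max(candidates, key=lambda r: r.video_kbps)


if __name__ == "__main__":
    for ladder in (FULL_LADDER, LEAN_LADDER):
        print(pick_rendition(ladder, measured_kbps=4000, max_height=1080).name)
```

With a measured 4000 kbps and a 1080-line player, the five-rung ladder serves 720p while the three-rung one drops the viewer to 540p: fluid playback, just not the best quality that connection could carry.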

Add to that, much of the public has come to understand instinctively that a better network means better quality, and will make an effort to improve their connection if they want better video.

Recycle the original encode

This won’t work for all scenarios but…

Particularly when the source feed is encoded by known studio equipment (unlike user-contributed feeds, which tend to be less predictable), you can transmux the original video without re-encoding it and make it the highest-quality variant in the ABR set. Since the top rendition has the most pixels, it is usually the most expensive one to encode, so depending on the profile this may save up to half the overall processing power needed for transcoding.
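A minimal sketch of what that can look like with ffmpeg's HLS muxer, assuming an ffmpeg build with libx264: the first variant is the source video passed through with `-c:v copy`, and only the lower rungs are decoded, scaled and re-encoded. The helper name `build_hls_ladder_cmd`, the rung list and the 6-second segment length are hypothetical, and audio is left out to keep it short. Because the copied variant can only be cut at the source's keyframes, the transcoded rungs get a `gop` value that is assumed to match the studio encoder's keyframe interval, so segments line up across variants.

```python
import shlex
from typing import List, Tuple


def build_hls_ladder_cmd(src: str,
                         transcoded: List[Tuple[int, int]],
                         gop: int,
                         out_dir: str = "out") -> List[str]:
    """Build an ffmpeg argument list (video only) in which variant 0 is the
    source video copied as-is and the other variants are x264 encodes.

    transcoded: (height, kbps) pairs for the lower rungs.
    gop: keyframe interval in frames, assumed to match the source's.
    """
    n = 1 + len(transcoded)
    cmd = ["ffmpeg", "-i", src]
    # One output video stream per rung, all fed from the first input video.
    for _ in range(n):
        cmd += ["-map", "0:v:0"]
    # Top rung: transmux only, no decoding or encoding.
    cmd += ["-c:v:0", "copy"]
    for i, (height, kbps) in enumerate(transcoded, start=1):
        cmd += [
            f"-c:v:{i}", "libx264",
            f"-b:v:{i}", f"{kbps}k",
            f"-filter:v:{i}", f"scale=-2:{height}",
            f"-g:v:{i}", str(gop),
            f"-sc_threshold:v:{i}", "0",  # no extra scene-cut keyframes
        ]
    var_map = " ".join(f"v:{i}" for i in range(n))
    cmd += [
        "-f", "hls",
        "-hls_time", "6",
        "-master_pl_name", "master.m3u8",
        "-var_stream_map", var_map,
        f"{out_dir}/stream_%v.m3u8",
    ]
    return cmd


if __name__ == "__main__":
    cmd = build_hls_ladder_cmd("input.ts", [(540, 2000), (360, 1000)], gop=60)
    print(shlex.join(cmd))  # or subprocess.run(cmd, check=True)
```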

Recycle the original audio

Simple: just mandate a middle-ground audio bitrate/quality at the source and use it, untouched, for all renditions. Not always ideal for audiophiles, but good enough for us humans.
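In HLS, one way to serve a single audio encode to every rendition is to publish it once as an audio rendition group and have each video variant reference it, rather than muxing a copy into every variant. The sketch below writes such a master playlist; the file names, the `aud` group ID and the `master_playlist` helper are hypothetical, attributes such as `CODECS` are omitted for brevity, and nominal bitrates stand in for the peak `BANDWIDTH` values the HLS specification actually asks for.

```python
def master_playlist(variants, audio_uri, audio_kbps):
    """Build an HLS master playlist where all video variants share one
    audio rendition.

    variants: list of (uri, video_kbps, width, height) tuples.
    """
    lines = [
        "#EXTM3U",
        # The single audio encode, declared once as group "aud".
        '#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="aud",NAME="main",'
        f'DEFAULT=YES,AUTOSELECT=YES,URI="{audio_uri}"',
    ]
    for uri, video_kbps, width, height in variants:
        # BANDWIDTH is in bits/s and covers video plus the shared audio.
        bandwidth = (video_kbps + audio_kbps) * 1000
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},"
            f'RESOLUTION={width}x{height},AUDIO="aud"'
        )
        lines.append(uri)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(master_playlist(
        [("video_1080.m3u8", 5000, 1920, 1080),
         ("video_360.m3u8", 1000, 640, 360)],
        audio_uri="audio.m3u8",
        audio_kbps=128,
    ))
```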

Use less complex transcoding

Encoding is a fine trade-off between quality, bitrate (which translates into the bandwidth required for transport) and processing needs. In the special case of live streaming, the transcoding device has to offer enough power to process the content in real time. A less complex transcoding profile requires fewer computing resources, but it produces video with a higher bitrate for the same quality, or lower quality for the same bitrate. Of course, traffic costs money too, and it may be unwise to save pennies on one transcode and then pay for the extra traffic multiplied by the number of viewers. Yet every case is different, and at times the numbers may favor this approach.
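The trade-off boils down to simple arithmetic. A minimal sketch, assuming the cheaper profile (for instance a faster x264 preset) needs `extra_kbps` more to reach the same quality, that egress is billed per GB, and that the compute saving per hour of transcoding is known; the figures in the usage line are hypothetical placeholders, not measured prices.

```python
def extra_traffic_gb(extra_kbps: float, viewer_hours: float) -> float:
    """Extra egress in (decimal) GB caused by a higher bitrate.
    kbps -> bytes/s is * 1000 / 8, and an hour is 3600 s."""
    bytes_total = extra_kbps * 1000 / 8 * 3600 * viewer_hours
    return bytes_total / 1e9


def break_even_viewers(extra_kbps: float,
                       egress_usd_per_gb: float,
                       compute_saving_usd_per_hour: float) -> float:
    """Average concurrent viewers at which the extra traffic costs as much
    as the cheaper transcode saves. Above it, the cheap profile loses money."""
    per_viewer_hour = extra_traffic_gb(extra_kbps, 1) * egress_usd_per_gb
    return compute_saving_usd_per_hour / per_viewer_hour


if __name__ == "__main__":
    # Placeholder numbers: +300 kbps, $0.09/GB egress, $0.05/h compute saving.
    print(break_even_viewers(300, 0.09, 0.05))
```

With those placeholder numbers, 300 kbps of extra bitrate costs about 1.2¢ per viewer-hour, so a 5¢/hour compute saving is wiped out at roughly four concurrent viewers.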

Use the GPU

Modern GPUs have had dedicated built-in video encoders for quite a while now, and they can be put to good use in many scenarios. Off the top of my head, you'd be able to transcode 2-4 times more cheaply; the real number depends on a huge number of factors, most notably the cost of actually buying or renting the GPUs.
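Whether a hardware encoder is usable depends on the ffmpeg build and on the machine. A minimal sketch, assuming ffmpeg is on the PATH: it parses the output of `ffmpeg -encoders` and prefers NVIDIA (`h264_nvenc`), Intel (`h264_qsv`) or AMD (`h264_amf`) hardware encoders over software `libx264`. The preference order and the helper names are assumptions. An encoder appearing in the list only means it was compiled in, so a real setup would confirm it with a short test encode before relying on it.

```python
import shutil
import subprocess

# Preference order: hardware H.264 encoders first, software x264 last.
PREFERRED = ["h264_nvenc", "h264_qsv", "h264_amf", "libx264"]


def available_encoders(encoders_listing: str) -> set:
    """Parse the text printed by `ffmpeg -encoders` into a set of video
    encoder names. Encoder lines look like ' V....D h264_nvenc  NVIDIA ...'."""
    names = set()
    for line in encoders_listing.splitlines():
        parts = line.split()
        # Video encoders have a capability field starting with "V".
        if len(parts) >= 2 and parts[0].startswith("V"):
            names.add(parts[1])
    return names


def pick_h264_encoder(encoders_listing: str) -> str:
    """Return the most preferred H.264 encoder present in the listing."""
    found = available_encoders(encoders_listing)
    for name in PREFERRED:
        if name in found:
            return name
    raise RuntimeError("no usable H.264 encoder found")


def query_ffmpeg_encoders() -> str:
    """Run `ffmpeg -hide_banner -encoders` and return its standard output."""
    exe = shutil.which("ffmpeg")
    if exe is None:
        raise FileNotFoundError("ffmpeg not found on PATH")
    result = subprocess.run([exe, "-hide_banner", "-encoders"],
                            capture_output=True, text=True, check=True)
    return result.stdout


if __name__ == "__main__":
    print(pick_h264_encoder(query_ffmpeg_encoders()))
```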

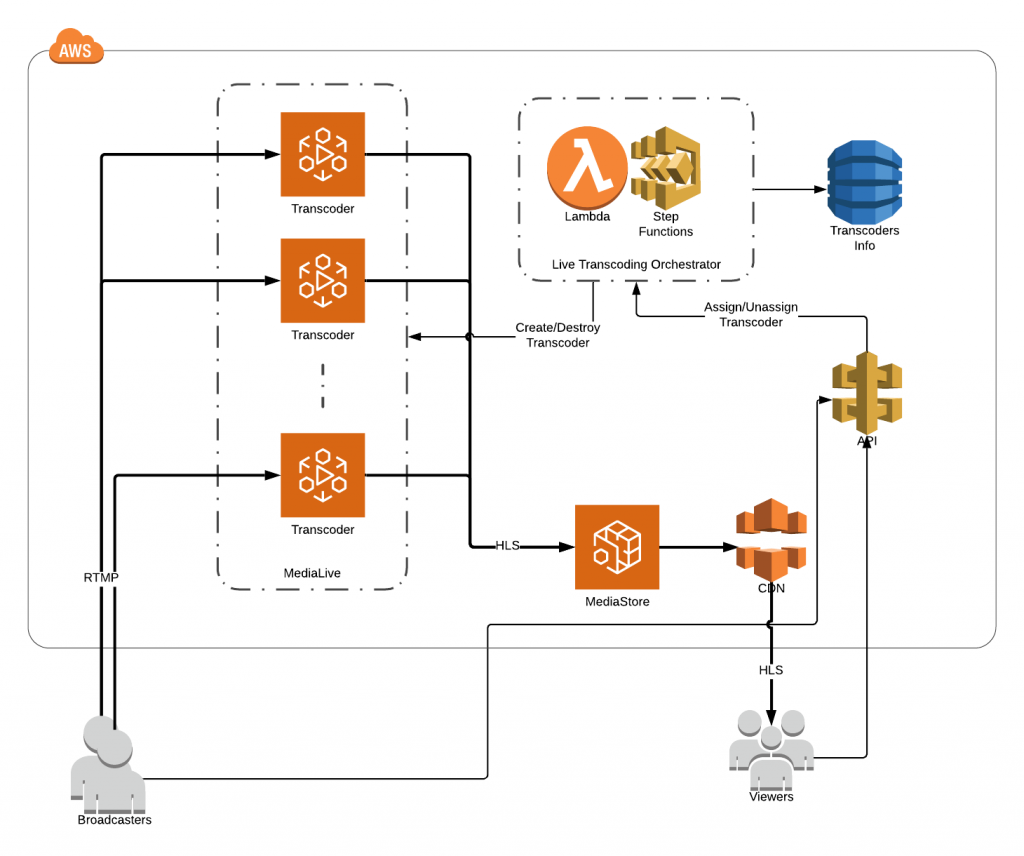

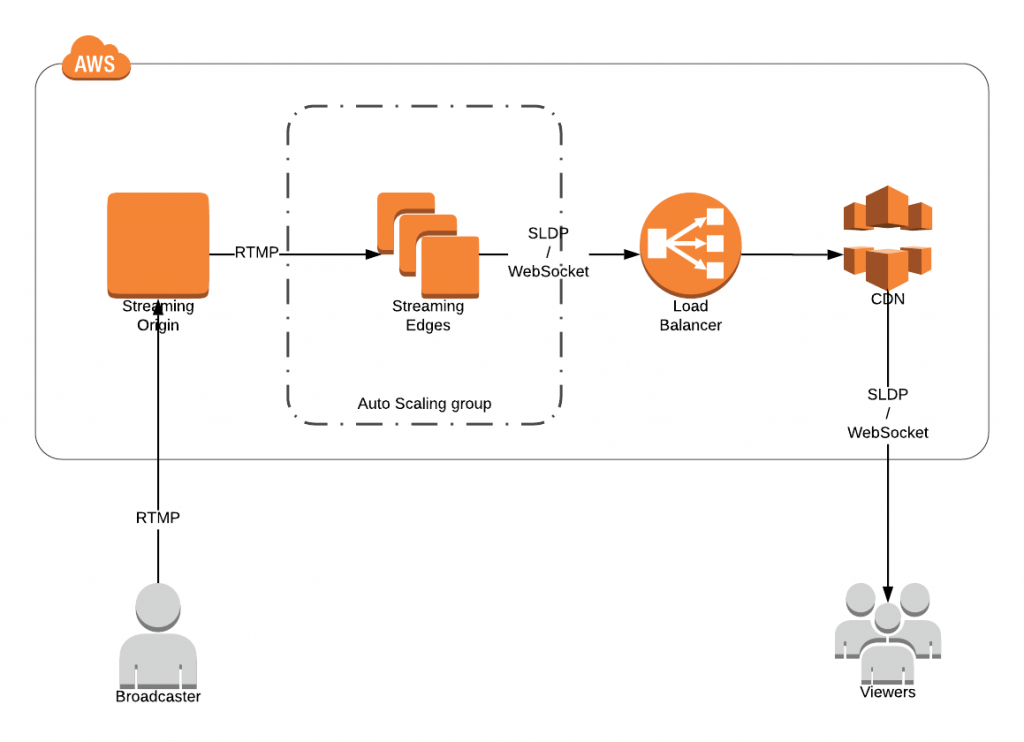

Use the cloud

That's a no-brainer nowadays, I guess. Even if you have some idle dedicated servers lying around, it would be hard to build a scalable solution around them. Between SaaS cloud transcoding and running custom software on cloud virtual servers, the latter is cheaper by far, though it comes with extra headaches.

Use free software

Duh, it doesn't get any cheaper than that. You don't get to call support when something goes wrong, but hey, maybe your team is too good to ever need that. The encoders available in FFmpeg and GStreamer (e.g. x264) are hardly inferior to their commercial counterparts, and they are mature and stable, so there are no real worries there; most software transcoders are built on top of them anyway.

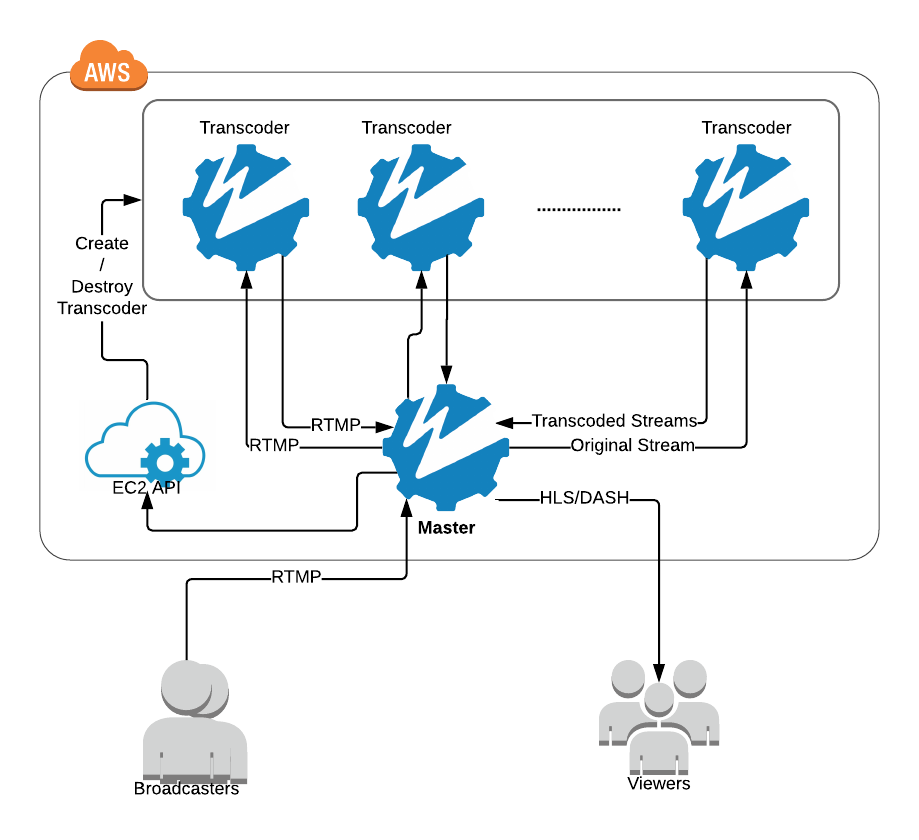

Use a separate virtual server for every stream

That's not necessarily a winner in every scenario. In fact, running multiple transcodes on a more powerful machine is always more economical, but only if you can use it to full capacity; otherwise you're about as efficient as flying a large plane half empty.
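The half-empty plane in numbers: a minimal sketch with hypothetical prices (not actual cloud rates), comparing a large instance at $0.40/hour that can hold 8 transcodes against a small one at $0.06/hour running a single stream. The helper names are made up for illustration.

```python
from typing import Optional


def cost_per_stream_hour(instance_usd_per_hour: float,
                         capacity_streams: int,
                         active_streams: int) -> float:
    """Effective hourly cost of each live transcode on a shared machine.
    The machine is paid for in full, however many slots are in use."""
    if not 0 < active_streams <= capacity_streams:
        raise ValueError("active_streams must be in 1..capacity_streams")
    return instance_usd_per_hour / active_streams


def min_streams_to_beat(big_usd_per_hour: float,
                        capacity_streams: int,
                        small_usd_per_hour: float) -> Optional[int]:
    """Smallest number of busy slots at which the shared machine is cheaper
    per stream than one small instance per stream, or None if never."""
    for n in range(1, capacity_streams + 1):
        if big_usd_per_hour / n < small_usd_per_hour:
            return n
    return None


if __name__ == "__main__":
    print(cost_per_stream_hour(0.40, 8, 3))
    print(min_streams_to_beat(0.40, 8, 0.06))
```

With these numbers the large machine only wins once at least 7 of its 8 slots are busy; with 3 live streams, each one effectively costs about 13¢/hour, more than twice the price of a dedicated small server.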

Take advantage of the Launch Credits

This one is very close to a hack. Specific to AWS, some of the older burstable virtual server types (dating from the days of per-hour billing) start with a stash of launch CPU credits, which lets you burst on cheap CPU and throw the instance away once the credits are depleted. There's a limit to how much you can abuse this 'feature', but it's good enough to get you started at a real bargain.
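How long the bargain lasts can be estimated from the credit arithmetic, since one CPU credit is one vCPU running at 100% for one minute. A minimal sketch; the example figures (30 launch credits per vCPU and 6 credits earned per hour, as for a T2 Standard `t2.micro`) are assumptions to verify against the current AWS documentation, and the model ignores details such as the order in which launch and earned credits are spent.

```python
def burst_minutes(launch_credits: float,
                  vcpus: int,
                  utilization: float,
                  earn_per_hour: float) -> float:
    """Minutes until the launch credits run out.

    The instance spends vcpus * utilization credits per minute
    (1 credit = 1 vCPU at 100% for 1 minute) while earning
    earn_per_hour / 60 credits per minute.
    """
    spend = vcpus * utilization
    earn = earn_per_hour / 60
    net = spend - earn
    if net <= 0:
        # At or below baseline, the balance never drains.
        return float("inf")
    return launch_credits / net


if __name__ == "__main__":
    # Assumed t2.micro-like figures: 1 vCPU, 30 launch credits, 6 credits/h.
    print(burst_minutes(30, vcpus=1, utilization=1.0, earn_per_hour=6))
```

With those assumed figures, a transcode pinning the CPU at 100% gets a bit over half an hour before the instance falls back to its baseline performance, which is the point where you would throw it away and launch a fresh one.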

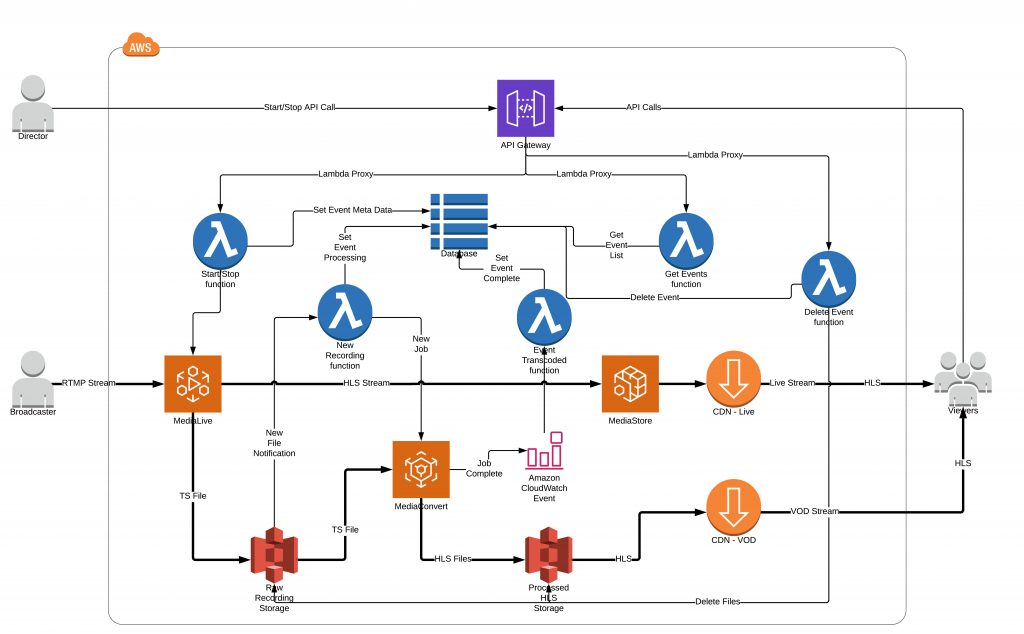

Putting it all together (actually just some) …

…the solution is up for grabs here. It deploys in a few clicks and sets you up with a rather generous ABR profile for as little as 2-5¢ (!!!) per hour of live streaming, or well within the free tier if you're still eligible for it.

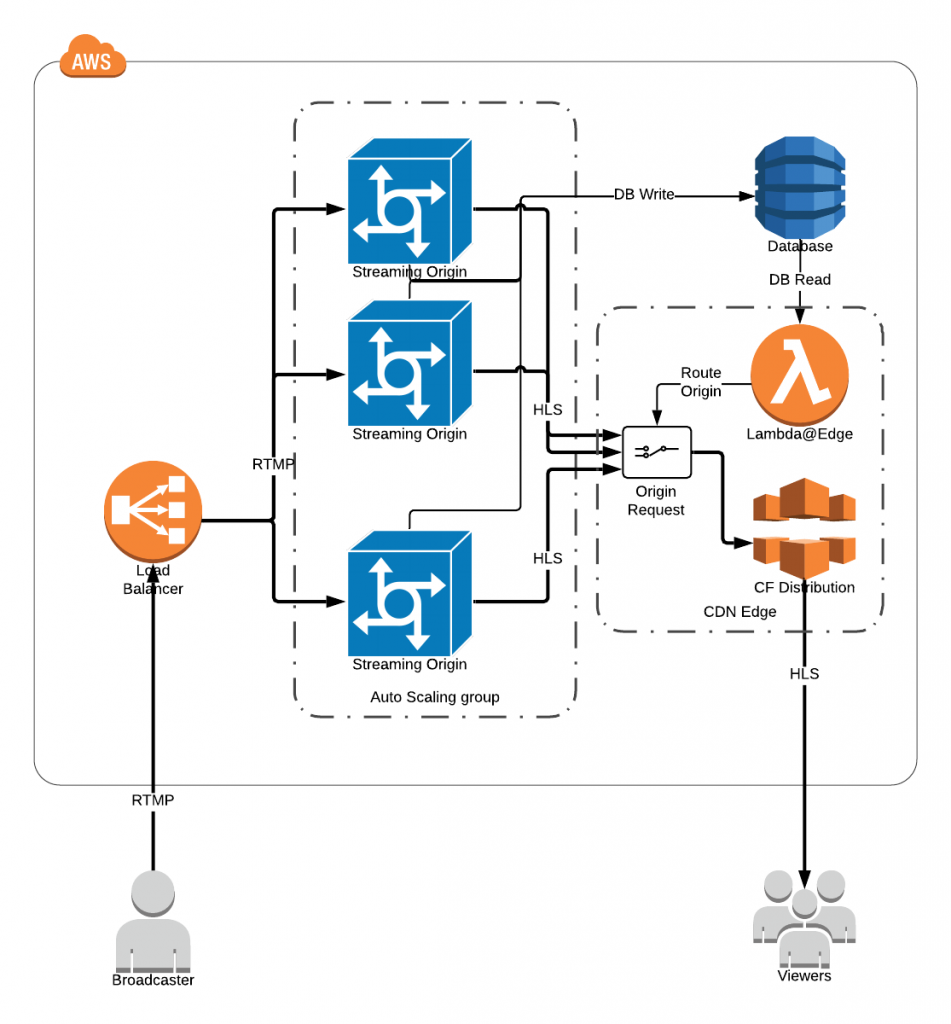

Does it scale?

Not in all directions. Long story short, you can stay on the cheap end of the spectrum if you transcode up to a couple hundred hours of content per day; beyond that, the perks start to run out.

Is it worth it?

Oh yes! If only I could pocket the savings it's brought…

Is it stable/reliable?

It should be. I monitored it in production for a while and noticed no issues. See for yourself.

Is this blog sponsored by AWS?

No, and not by any other company for that matter. I just happen to have been exposed to Amazon's platform much more than to others, but (except perhaps for very specific use cases) I don't believe it's any better than other cloud platforms. I will gladly take on the challenge of deploying solutions in any environment, or of objectively choosing the one that best suits particular needs.