My videos are buffering…

These days, when a client tells you their video website buffers a lot, telling them they need Adaptive Bitrate streaming is almost a reflex. That's the easy part. The next logical questions are how long it will take and how much it will cost, and I'm sure they all love to hear "Well, it depends…"

And it depends on quite a lot: you need to decide where and how to transcode your content, where to store it, which format to store and distribute it in, which player to use, and so on.

If low cost is key, your video library is small, and you have a spare computer you can keep busy transcoding for a few days, then you may like the simple solution described here. It has been deployed (with adjustments) for a handful of clients. One of them, Pierre, was kind enough to let us share the code, implementation details and some usage statistics after almost 2 years in production.

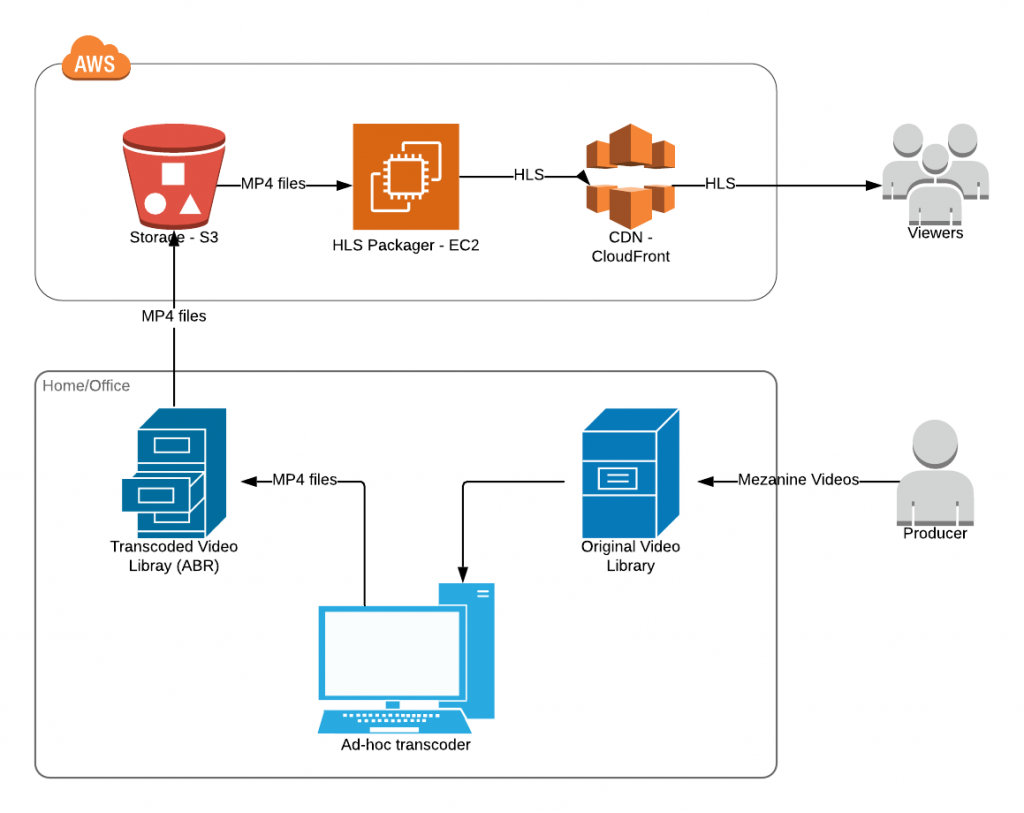

The setup is meant to be deployed on AWS, but its components can be adapted to other clouds or to dedicated infrastructure. Setting up a CDN is optional.

Content reaches your viewers via the HLS protocol. Compared to its more agile friend MPEG-DASH, HLS has been around longer and has better support among free and low-cost players.
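For readers new to HLS: the player first downloads a master playlist listing the available quality variants, then picks and switches between them as bandwidth allows. The sketch below builds such a master playlist in the format defined by RFC 8216. It is only an illustration; the bitrates, resolutions and playlist paths are hypothetical, and this is not the manifest format produced by the scripts described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """One quality rung; all example values below are hypothetical."""
    bandwidth_bps: int  # peak bitrate advertised to the player
    width: int
    height: int
    uri: str            # media playlist for this rung


def master_playlist(variants: list) -> str:
    """Build an HLS master playlist listing each variant, lowest bitrate first."""
    lines = ["#EXTM3U"]
    for v in sorted(variants, key=lambda v: v.bandwidth_bps):
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={v.bandwidth_bps},"
            f"RESOLUTION={v.width}x{v.height}"
        )
        lines.append(v.uri)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    ladder = [
        Variant(800_000, 640, 360, "360p/index.m3u8"),
        Variant(5_000_000, 1920, 1080, "1080p/index.m3u8"),
        Variant(2_800_000, 1280, 720, "720p/index.m3u8"),
    ]
    print(master_playlist(ladder), end="")
```

The player reads the `BANDWIDTH` attributes to decide which rung it can sustain, which is what makes the stream adaptive.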

The scripts provided transcode your videos into 6 quality variants and also generate the HLS manifest. They work on both Windows and Mac/Linux: just put all your files in the originals folder and run the .cmd or .sh script. Then wait…
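To give an idea of what a 6-variant transcode involves, here is a minimal Python sketch that builds one ffmpeg command per variant. It is not the actual .cmd/.sh scripts. The ladder values, the 2-second keyframe interval, the 96 kbit/s audio and the `preset` choice are all assumptions for illustration; the ffmpeg options themselves (`-vf scale`, `-c:v libx264`, `-force_key_frames`, `-movflags +faststart`) are standard.

```python
from pathlib import Path

# Hypothetical 6-rung ladder: (output height in pixels, video bitrate in kbit/s).
LADDER = [(240, 300), (360, 500), (480, 900), (540, 1200), (720, 1700), (1080, 2800)]


def ffmpeg_commands(src: Path, out_dir: Path, preset: str = "slow") -> list:
    """Return one ffmpeg argument list per quality variant (nothing is executed)."""
    commands = []
    for height, kbps in LADDER:
        out = out_dir / f"{src.stem}_{height}p.mp4"
        commands.append([
            "ffmpeg", "-y", "-i", str(src),
            "-vf", f"scale=-2:{height}",           # keep aspect ratio, even width
            "-c:v", "libx264", "-preset", preset,  # slower preset: smaller files, longer encode
            "-b:v", f"{kbps}k",
            "-maxrate", f"{kbps * 3 // 2}k", "-bufsize", f"{kbps * 2}k",
            # Keyframe every 2 s so all variants can later be cut into aligned HLS segments.
            "-force_key_frames", "expr:gte(t,n_forced*2)",
            "-c:a", "aac", "-b:a", "96k",
            "-movflags", "+faststart",              # move the moov index to the start of the file
            str(out),
        ])
    return commands


if __name__ == "__main__":
    for cmd in ffmpeg_commands(Path("originals/lecture.mov"), Path("variants")):
        print(" ".join(cmd))
```

To actually run the encodes, pass each list to `subprocess.run(cmd, check=True)`. Aligned keyframes across variants matter because the player switches quality at segment boundaries.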

Rather than transcoding directly to HLS, the scripts output the quality variants as .mp4 files. This spares you from uploading (hundreds of) thousands of segment files to S3, and saves you the storage cost of the HLS overhead (up to 15%). Transmuxing to HLS is performed on the fly by nginx-vod-module, set up between S3 and the CDN. That has a cost of course, but it runs just fine on a nano(!) EC2 instance.
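The "hundreds of thousands of files" figure is easy to reproduce with some arithmetic. The sketch below assumes 6-second HLS segments, 6 variants, one media playlist per variant and one master playlist per video. The segment length and the example library are assumptions, not figures from the deployment described here.

```python
import math


def hls_file_count(video_minutes: list, variants: int = 6, segment_s: float = 6.0) -> int:
    """Files needed if every video were pre-segmented to HLS before upload.

    Per video: one master playlist, plus per variant one media playlist
    and ceil(duration / segment length) segment files.
    """
    total = 0
    for minutes in video_minutes:
        segments = math.ceil(minutes * 60 / segment_s)
        total += 1 + variants * (1 + segments)
    return total


def mp4_file_count(video_minutes: list, variants: int = 6) -> int:
    """With on-the-fly transmuxing, storage only holds one .mp4 per variant."""
    return len(video_minutes) * variants


if __name__ == "__main__":
    library = [15.0] * 230  # hypothetical: 230 videos of 15 minutes each
    print(hls_file_count(library), "HLS files vs", mp4_file_count(library), "MP4 files")
```

For this hypothetical library, about 57 hours of video, it prints `208610 HLS files vs 1380 MP4 files`.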

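At a glance, the setup looks like this: originals are transcoded to .mp4 variants on a local computer and uploaded to S3; nginx-vod-module on a small EC2 instance transmuxes them to HLS on the fly; CloudFront (optional) delivers the result to viewers.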

Does it scale?

Viewer-wise, yes. There's no limit to how many people can watch, in total or at the same time. There are 4 levels of caching (CloudFront, the VOD module's own cache, and Nginx's 2 levels), which leave the tiny EC2 instance little chance of getting overwhelmed.
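Why stacked caches protect the origin can be shown with a toy model: each request falls through the layers in order, and only a miss in every layer reaches the origin. This is a simplified illustration using plain LRU caches with hypothetical capacities. It is not how CloudFront, Nginx or nginx-vod-module actually implement caching; they evict by size and expiry time, among other things.

```python
from collections import OrderedDict
from typing import Callable, Optional


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used entry."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self._items = OrderedDict()

    def get(self, key: str) -> Optional[bytes]:
        if key not in self._items:
            return None
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def put(self, key: str, value: bytes) -> None:
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # drop the least recently used entry


class LayeredCache:
    """Checks each layer in order; only a miss in every layer reaches the origin."""

    def __init__(self, layers: list, origin: Callable[[str], bytes]):
        self.layers = layers
        self.origin = origin
        self.origin_hits = 0

    def fetch(self, key: str) -> bytes:
        missed = []
        for layer in self.layers:
            value = layer.get(key)
            if value is not None:
                break
            missed.append(layer)
        else:  # no layer had it: go to the origin
            value = self.origin(key)
            self.origin_hits += 1
        for layer in missed:  # backfill the layers that missed
            layer.put(key, value)
        return value


if __name__ == "__main__":
    # Hypothetical: 4 layers, 10,000 requests spread over 50 popular segments.
    cache = LayeredCache([LRUCache(20), LRUCache(40), LRUCache(60), LRUCache(100)],
                         origin=lambda key: key.encode())
    for i in range(10_000):
        cache.fetch(f"segment-{i % 50}")
    print("origin hits:", cache.origin_hits)
```

It prints `origin hits: 50`: only the first request for each segment reaches the origin, even though the two smallest layers keep churning.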

Content-wise, not really. A 15-minute video takes some 3 hours to process on a regular computer(!). If you only need to upload 1-2 videos a week, that's no big deal. If you need more, processing time can be improved with trade-offs, but only 2-3 fold; consider cloud transcoding if that's not good enough for you.
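Turning the figures above into a quick capacity check: 3 hours per 15 minutes of video means transcoding runs about 12× slower than real time. The sketch below derives only that ratio from the text; the `speedup` parameter stands for the 2-3 fold improvement mentioned above, and the weekly machine hours are an assumption you can change.

```python
# Ratio from the text: 3 hours of processing per 15 minutes of video.
SLOWDOWN = (3 * 60) / 15  # 12x slower than real time


def processing_hours(content_minutes: float, speedup: float = 1.0) -> float:
    """Wall-clock hours to transcode `content_minutes` of video on one machine."""
    return content_minutes * SLOWDOWN / speedup / 60


def weekly_capacity_minutes(machine_hours: float = 24 * 7, speedup: float = 1.0) -> float:
    """Minutes of content one machine can process per week if it runs `machine_hours`."""
    return machine_hours * 60 * speedup / SLOWDOWN


if __name__ == "__main__":
    print(processing_hours(2 * 15))                   # two 15-minute videos a week
    print(weekly_capacity_minutes() / 60)             # hours of content per week, 24/7
    print(weekly_capacity_minutes(speedup=3.0) / 60)  # same, with a 3x speedup
```

Two 15-minute videos take 6 hours; a machine running 24/7 tops out at about 14 hours of content a week, or about 42 with a 3× speedup.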

Is it stable?

So it seems. Pierre's Nginx has been running nonstop since May 2017 without a single restart or upgrade (although an upgrade might come in handy at some point, I guess). His library is only some 200 GB, but I dare say the setup could easily handle ten times as much. Nginx elegantly caches the video files in chunks (via range requests), while CloudFront caches only the HLS manifests and segments. This way the most popular parts of the most popular items get cached first, which saves resources and protects the system from overload.
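Chunked caching works because every requested byte range is mapped onto fixed-size, aligned slices, and each slice is cached as its own entry. Overlapping requests from different viewers then reuse the same slices. The sketch below illustrates that mapping under an assumed 1 MiB slice size. Nginx's `ngx_http_slice_module` works along these lines, but the exact configuration of Pierre's setup is not given here, so treat this as an illustration only.

```python
def aligned_slices(start: int, end: int, file_size: int,
                   slice_size: int = 1 << 20) -> list:
    """Map an inclusive byte range [start, end] onto fixed-size, aligned slices.

    Each slice would be fetched from the origin and cached independently;
    the last slice is clipped to the end of the file.
    """
    if not 0 <= start <= end < file_size:
        raise ValueError("byte range outside the file")
    first, last = start // slice_size, end // slice_size
    return [
        (i * slice_size, min((i + 1) * slice_size, file_size) - 1)
        for i in range(first, last + 1)
    ]


def range_header(byte_range: tuple) -> str:
    """HTTP Range header value for an inclusive byte range."""
    return f"bytes={byte_range[0]}-{byte_range[1]}"


if __name__ == "__main__":
    size = 5_000_000  # hypothetical 5 MB file
    viewer_a = aligned_slices(0, 1_500_000, size)
    viewer_b = aligned_slices(1_200_000, 2_500_000, size)
    print([range_header(s) for s in viewer_a])
    print([range_header(s) for s in viewer_b])
    print("shared slices:", sorted(set(viewer_a) & set(viewer_b)))
```

The two viewers share the slice starting at byte 1,048,576, so the second request for it is served from cache instead of S3.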

The only thing that could bring it to a crawl is a lot of people suddenly starting to watch a lot of different pieces of content at the same time. As content gets added gradually, that's highly unlikely. Just in case your site goes viral, you can upgrade the EC2 instance in 2 minutes or so, with only partial downtime for viewers. 🙂 And if that's not enough because millions of people are suddenly watching, you can clone the instance as part of an Auto Scaling Group.

How cheap is it exactly?

You’d be paying some $45/year for an on-demand t3.nano instance, and you can easily cut that in half by purchasing a reservation.

S3 storage costs some $0.28 per GB per year. You’ll be storing about 3.5GB for every hour of content.
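With those two figures, the yearly storage bill is a one-line multiplication. This sketch hard-codes the numbers from the text ($0.28 per GB per year, about 3.5 GB per hour of content); actual S3 prices vary by region and storage class, so treat the constants as placeholders to update.

```python
S3_USD_PER_GB_YEAR = 0.28  # figure from the text; varies by region and storage class
GB_PER_CONTENT_HOUR = 3.5  # figure from the text: total stored per hour of video


def storage_gb(content_hours: float) -> float:
    """Approximate S3 footprint for `content_hours` of video."""
    return content_hours * GB_PER_CONTENT_HOUR


def yearly_storage_usd(content_hours: float) -> float:
    """Approximate yearly S3 storage cost in US dollars."""
    return storage_gb(content_hours) * S3_USD_PER_GB_YEAR


if __name__ == "__main__":
    for hours in (10, 57, 100):
        print(f"{hours} h -> {storage_gb(hours):.1f} GB -> ${yearly_storage_usd(hours):.2f}/year")
```

For example, 100 hours of content take about 350 GB, or roughly $98 a year.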

CDN traffic will make up the bulk of your cost, and it's hard to estimate: it depends on the number of viewers, their location and their bandwidth. You do get 50 GB/month free for the first year, though, as part of the AWS Free Tier.
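A rough estimate is still possible once you guess how many hours your viewers watch per month and at what average bitrate. The sketch below converts that into GB delivered and subtracts the 50 GB/month free allowance. The per-GB price is a parameter you must supply from current CloudFront pricing for your regions; the `0.085` used in the example is only a placeholder, not a quoted price.

```python
def monthly_cdn_gb(viewer_hours: float, avg_mbps: float) -> float:
    """GB delivered for `viewer_hours` of playback at an average bitrate in Mbit/s."""
    # hours -> seconds, Mbit/s -> Mbit, /8 -> MB, /1000 -> GB (decimal units)
    return viewer_hours * 3600 * avg_mbps / 8 / 1000


def monthly_cdn_usd(viewer_hours: float, avg_mbps: float,
                    usd_per_gb: float, free_gb: float = 50.0) -> float:
    """Monthly CDN cost after the free allowance (set free_gb=0 after year one)."""
    billable = max(0.0, monthly_cdn_gb(viewer_hours, avg_mbps) - free_gb)
    return billable * usd_per_gb


if __name__ == "__main__":
    # Hypothetical: 1,000 viewer-hours a month at 2 Mbit/s, placeholder price.
    print(monthly_cdn_gb(1000, 2.0), "GB")
    print(round(monthly_cdn_usd(1000, 2.0, usd_per_gb=0.085), 2), "USD")
```

1,000 viewer-hours at 2 Mbit/s come to 900 GB a month, which shows why traffic, not storage or compute, dominates the bill.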

There's also some internal traffic to account for, on the S3 to EC2 and EC2 to CloudFront routes. Both are cached, though, so expect it to be negligible (say under $1/year).

And that’s it.