“We have idle cores everywhere”

Live transcoding for ABR typically means one encoder per stream, provisioned to handle the full ladder. Whatever compute you have elsewhere (idle containers, a staging environment doing nothing at night, CI runners between builds) stays out of it. Not because it can’t transcode, but because the architecture was never built to ask.

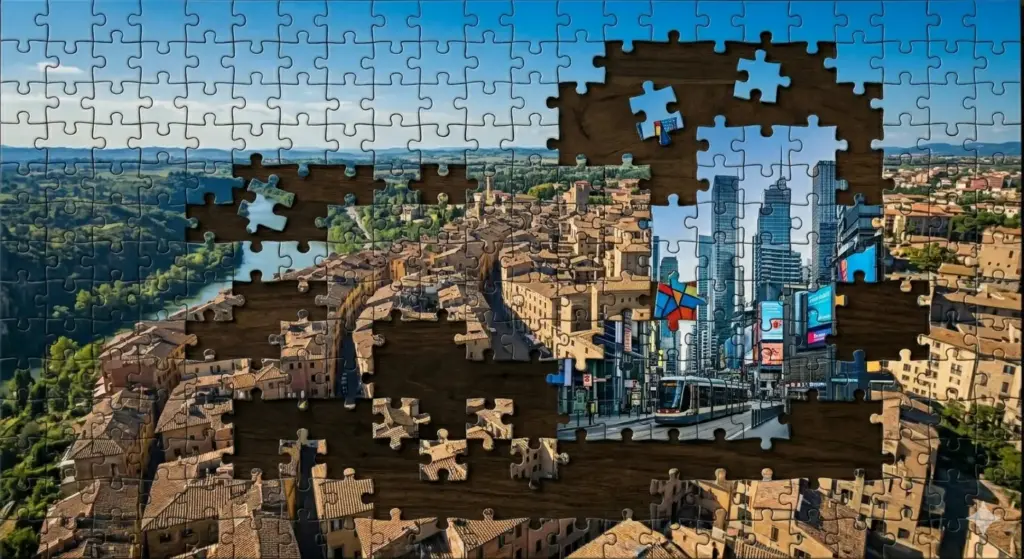

The usual approach is to transcode the full stream and then segment it for delivery. The approach described here does it the other way around: segment first, then transcode. A segment is already a self-contained video. It can be played, transcoded, and slotted back in without touching anything around it. Transcode workers don’t need to know about each other, or about the stream. They just need a source video and transcoding settings.

The pipeline is simple. The source stream goes into a segmenter, which writes segments and an HLS playlist. A manager watches that playlist and probes each segment to decide which renditions to produce. Each combination of segment and rendition below the original becomes a standalone job on a queue. Workers pull jobs, transcode, and report back. The manager rebuilds the playlists. The original quality passes through untouched.
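As a rough illustration of the fan-out step, here is a minimal Python sketch. The `Job` type, the `jobs_for_segment` helper, the segment path, and the ladder values are all hypothetical names chosen for this example (the ladder mirrors the 1080p example used later in this post); the proof of concept may structure this differently.

```python
from dataclasses import dataclass

# Hypothetical ladder of target heights (assumption for this sketch).
LADDER = (720, 480, 360, 216)


@dataclass(frozen=True)
class Job:
    """One unit of work: a single segment transcoded to a single rendition."""
    segment_uri: str
    target_height: int


def jobs_for_segment(segment_uri, source_height, ladder=LADDER):
    """Return one job per rendition strictly below the source height.

    The original quality is never queued because it passes through as-is.
    """
    return [Job(segment_uri, h) for h in ladder if h < source_height]


# Example: a probed 1080p segment yields four independent jobs.
jobs = jobs_for_segment("live/seg_00042.ts", source_height=1080)
print([j.target_height for j in jobs])  # [720, 480, 360, 216]
```

Because each job carries everything a worker needs (a source segment and a target), nothing in the job refers to the stream as a whole.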

But wait…

What if there isn’t enough capacity?

Two angles here. First: nothing stops you from adding dedicated capacity on top of the spare compute. An autoscaling group that spins up workers when the queue grows is the obvious setup. The spare compute handles the baseline, the autoscaler handles the spikes.

But autoscaling takes time to kick in, sometimes fails, and costs money. So the system has a second answer: graceful degradation. When workers can’t keep up, the manager doesn’t just drop jobs randomly. It prioritizes lower renditions (which are cheaper to transcode and cover most viewers) and lets the higher ones go. Say the source is 1080p. The ladder might be 720p, 480p, 360p, and 216p, all transcoded, plus the original passed through as-is. Under load, 720p is the first to go, then 480p. The original stays because it’s never transcoded. The smallest renditions survive because they’re cheapest to produce and get priority. What temporarily disappears are the renditions in between. When capacity comes back, the full ladder resumes.
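The drop order can be sketched in a few lines of Python. This is only an illustration of the priority rule described above: the `renditions_to_produce` function and the idea of a per-segment `job_budget` are assumptions made for the example, not necessarily how the manager measures capacity.

```python
def renditions_to_produce(ladder, job_budget):
    """Pick which transcoded renditions to keep for a segment.

    ladder: target heights below the original, in any order.
    job_budget: hypothetical number of jobs the workers can absorb
                for this segment (assumption for this sketch).

    The lowest renditions are kept first, so the highest transcoded
    rendition is the first to be dropped under load.
    """
    lowest_first = sorted(ladder)
    kept = lowest_first[:max(job_budget, 0)]
    return sorted(kept, reverse=True)


ladder = [720, 480, 360, 216]
print(renditions_to_produce(ladder, 4))  # [720, 480, 360, 216]
print(renditions_to_produce(ladder, 3))  # [480, 360, 216]
print(renditions_to_produce(ladder, 2))  # [360, 216]
```

The passed-through original is not part of this list at all, which is why it survives any amount of load.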

This means you can run the system with no autoscaling at all if you accept occasional quality drops. Or you can use graceful degradation as a bridge while new instances come online. Or both.

Segmenting first doesn’t sound like a great idea. What’s the catch?

Segmenting before transcoding has a cost. A traditional encoder sees the full stream: it can look across segments for scene changes, distribute bitrate over time, and make global decisions about quality. When each segment is transcoded independently, that context is gone. Each segment starts fresh. Segment length is the trade-off: too short and the compression loss shows, too long and the delay grows. 10 seconds turned out to be the sweet spot.

There’s also the overhead of distribution. Every job means a storage download, a process spawn, disk I/O, a storage upload, and a callback. A traditional encoder runs continuously and handles everything in memory. Here, every segment pays for process creation and network round trips. At scale, storage bandwidth and queue throughput become line items worth watching.

Try it

A proof of concept is available here. You can have it running in minutes.

Does it scale?

Gracefully. Adding capacity means adding workers. They don’t need to know about each other, about the manager, or about the stream. They poll a queue, pick up a job, and post the result. You can start with one and scale to as many as you need. The queue is the only coordination point.
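A worker loop in this style can be very small. The sketch below uses Python’s in-process `queue.Queue` as a stand-in for a real message queue, and takes the `transcode` step as an injected function; both are assumptions for illustration. It also returns once the queue is empty so the example terminates, whereas a real worker would keep polling.

```python
import queue


def run_worker(jobs, results, transcode):
    """Pull jobs until the queue is empty; return how many were processed.

    jobs, results: queue.Queue instances standing in for a real queue
                   service and a result callback (assumption).
    transcode: callable(segment, height) -> output location (hypothetical).
    """
    done = 0
    while True:
        try:
            job = jobs.get_nowait()
        except queue.Empty:
            return done
        output = transcode(job["segment"], job["height"])
        results.put({"segment": job["segment"], "height": job["height"], "output": output})
        jobs.task_done()
        done += 1


# Usage with a fake transcoder that only builds an output name.
jobs, results = queue.Queue(), queue.Queue()
for height in (360, 216):
    jobs.put({"segment": "seg_00042.ts", "height": height})

processed = run_worker(jobs, results, lambda seg, h: f"{h}p/{seg}")
print(processed)  # 2
```

The worker holds no state between jobs, so starting a second or a hundredth copy needs no extra coordination beyond the shared queue.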

Is it stable?

The system recovers well. Renditions drop and come back as capacity fluctuates, and the playlists rebuild automatically. The catch is on the player side. Most HLS players load the master manifest once and stick with whatever renditions they saw at the start. If a rendition disappears and comes back, the player may not notice. In practice, playback has mostly been tested with video.js (powered by VHS), which reloads the master manifest when a rendition stalls. That makes it resilient enough. Other players are still expected to do well if degradation is minimal.
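To make the rebuild step concrete, here is a minimal sketch of regenerating a master manifest from whichever renditions are currently available. It assumes the manager rewrites the master manifest as renditions come and go; the `build_master_playlist` function, the bandwidth figures, and the URIs are hypothetical. Only the `#EXTM3U` and `#EXT-X-STREAM-INF` tags come from the HLS format itself.

```python
def build_master_playlist(variants):
    """Render an HLS master manifest.

    variants: iterable of (bandwidth_bps, width, height, uri) tuples
              for the renditions that are currently available.
    """
    lines = ["#EXTM3U"]
    # Highest bandwidth first; the order here is a choice made for this sketch.
    for bandwidth, width, height, uri in sorted(variants, reverse=True):
        lines.append(f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},RESOLUTION={width}x{height}")
        lines.append(uri)
    return "\n".join(lines) + "\n"


# Under load, only the passed-through original and the two smallest
# renditions are listed (illustrative numbers).
available = [
    (6_000_000, 1920, 1080, "source/playlist.m3u8"),
    (800_000, 640, 360, "360p/playlist.m3u8"),
    (400_000, 384, 216, "216p/playlist.m3u8"),
]
print(build_master_playlist(available))
```

A player that only reads this manifest once will keep whatever list it saw, which is why the reload behaviour on the player side matters.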

How cheap is it?

The whole point is that the compute is already there. The queue cost is negligible. What you save is the dedicated transcoding bill, whether that’s a managed service or purpose-built instances. The savings depend on how much idle capacity you have, but even a small pool goes a long way when the work is split this fine.

What about latency?

This is not a low-latency solution. Segmentation happens before transcoding, so you’re at least one full segment behind before a worker even picks up the job. Shorter segments would help with latency, but they multiply the number of jobs and the per-job overhead, so 10-second segments are a reasonable default. Expect at least 30 seconds of end-to-end delay, but plan for a minute. For most live streaming that’s fine. For real-time interaction, look elsewhere.
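A back-of-the-envelope model shows where the delay comes from. The breakdown below is an assumption for illustration: one segment of waiting at the segmenter, some queue wait, the transcode itself, and the few segments an HLS player typically stays behind the newest one. The function name and the sample numbers are hypothetical, not measurements.

```python
def estimate_latency(segment_s, transcode_s, queue_wait_s=0.0, player_hold_back_segments=3):
    """Rough end-to-end delay in seconds (illustrative model only).

    segment_s: segment duration; a segment must be complete before it is queued.
    transcode_s: time a worker spends on one job, including download and upload.
    queue_wait_s: time the job sits on the queue before a worker picks it up.
    player_hold_back_segments: how many segments the player stays behind
                               the newest one (assumption, player-dependent).
    """
    return segment_s + queue_wait_s + transcode_s + player_hold_back_segments * segment_s


# Optimistic case: idle workers, player two segments behind.
print(estimate_latency(10, 5, player_hold_back_segments=2))  # 35.0
# Busier case: jobs wait 10 seconds, player three segments behind.
print(estimate_latency(10, 5, queue_wait_s=10))  # 55.0
```

Both cases scale with segment duration, which is why shortening segments is the main lever on latency.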

Is it worth it?

For the right setup, absolutely. If you have idle compute, streams that tolerate delay, and viewers who tolerate (or don’t notice) an occasionally incomplete ABR ladder, this replaces a significant transcoding bill with next to nothing. For everyone else, a managed service is less to think about. The gap between the two is operational maturity, not technology.